Market Validation Examples for Founders Testing Demand

last updated: May 15, 2026

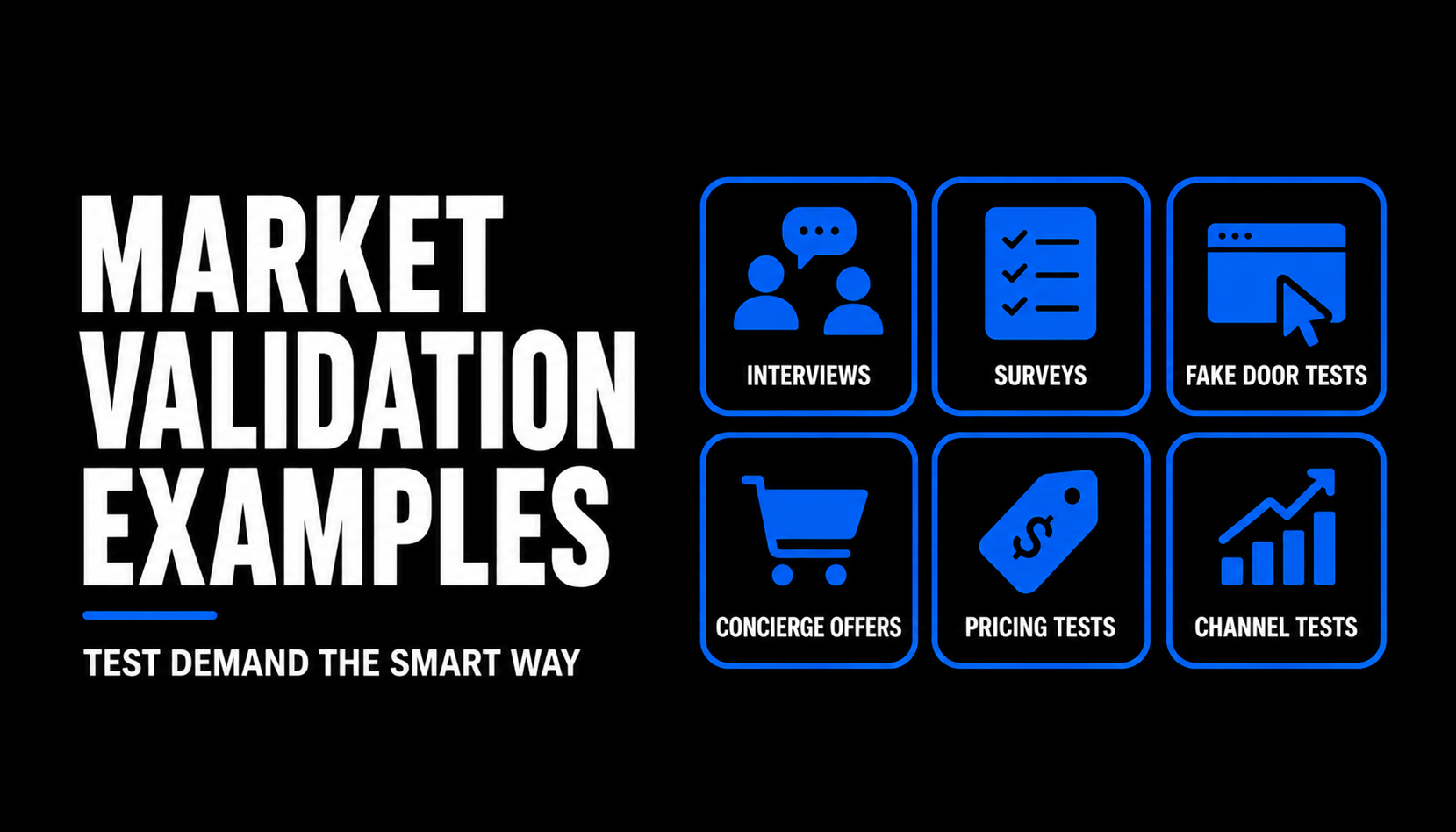

Market validation examples are useful only when they match the risk you are actually testing. A founder with no clear buyer should not run the same test as a founder with a waitlist, a prototype, or pricing resistance. Use this guide to compare startup market validation examples by assumption, stage, signal strength, and the next decision each test should unlock.

TL;DR: Pick the test for the riskiest assumption

Market validation is not one activity. The right method depends on whether you need to test the problem, buyer urgency, offer clarity, pricing, channel demand, or willingness to commit.

How to use the table: Find your riskiest assumption first, then choose the lowest-cost test that can produce a credible next decision.

- Use interviews and surveys when you need to understand the problem and buying context before building.

- Use smoke tests, fake doors, and concierge offers when you need behavior instead of opinions.

- Treat weak signals, such as compliments or casual signups, as prompts for stronger testing rather than proof of demand.

Core Definitions

- Market validation. Evidence that a specific customer segment has a painful enough problem, recognizes it, and may take action to solve it.

- Assumption tested. The specific belief your startup needs to prove or disprove, such as "buyers care," "buyers will pay," or "this channel can create demand."

- Signal strength. How much confidence a test should create. As a practical heuristic, a paid commitment is usually stronger than a survey answer, and a qualified survey answer is usually stronger than a founder's guess.

- Failure mode. The common way a validation method can mislead you, such as biased respondents, vanity signups, unclear copy, or testing the wrong audience.

- Best next step. The action the founder should take after the test, such as revising the segment, running discovery, testing pricing, or building a small pilot.

Market validation examples by risk type

Start by naming the riskiest assumption in the business right now. If you are still unclear on the whole validation sequence, use a broader business validation plan before choosing one example below. If you already know the risk, pick the matching test and define what decision you will make before you run it.

Founder Scenarios

- Pre-product founder with a broad idea: The riskiest assumption is not pricing yet. It is whether the problem is real for a narrow segment. Start with discovery interviews, then use a survey only to check whether the pattern exists beyond the first conversations. This is closest to early business idea validation.

- Founder with a clear problem but no proof of urgency: The riskiest assumption is whether people will take action. Run a landing page smoke test with one audience, one promise, and one meaningful action. A waitlist can help, but a request for a call, pilot, or workflow review is usually a stronger signal.

- Founder with users asking for many features: The riskiest assumption is priority, not general interest. A fake-door test can compare intent across features, but it should be handled carefully so users are not tricked or frustrated. Use it to learn what deserves deeper validation, not to pretend the feature exists.

- Founder selling B2B with long buying cycles: The riskiest assumption may be whether the buyer can move internally. A paid pilot, letter of intent, or scheduled implementation conversation is usually more useful than verbal enthusiasm. Treat procurement steps, security questions, and stakeholder introductions as part of the signal.

- Founder seeing survey interest but no conversion: The riskiest assumption is likely offer strength or audience quality. Surveys can reveal language and patterns, but they can overstate intent because answering a question is easier than changing behavior. Move to a behavior-based test before building.

Decision Rules

- If you need language, context, and problem detail, use interviews.

- If you need breadth after interviews, use a survey.

- If you need evidence that the promise gets attention, use a smoke test.

- If you need to compare feature demand, use a fake-door test.

- If you need proof that the outcome matters, run a concierge pilot.

- If you need business model evidence, ask for money, budget, approval, or a signed pilot scope.

- If you need channel evidence, test one audience and one message before scaling outreach.
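The decision rules above can be sketched as a simple lookup from the evidence you need to the test that produces it. This is only an illustration: the dictionary keys, the `DECISION_RULES` name, and the `pick_test` helper are hypothetical labels chosen for this sketch, not part of any tool.

```python
# Hypothetical mapping of "what you need to learn" to the test named in the
# decision rules. The keys are shorthand invented for this sketch.
DECISION_RULES = {
    "language, context, and problem detail": "interviews",
    "breadth after interviews": "survey",
    "evidence that the promise gets attention": "smoke test",
    "compare feature demand": "fake-door test",
    "proof that the outcome matters": "concierge pilot",
    "business model evidence": "ask for money, budget, approval, or a signed pilot scope",
    "channel evidence": "test one audience and one message before scaling outreach",
}


def pick_test(need: str) -> str:
    """Return the test matched to a stated need, or raise if the need is unknown."""
    key = need.strip().lower()
    if key not in DECISION_RULES:
        # An unknown need usually means the riskiest assumption is not named yet.
        raise KeyError(f"No rule for {need!r}; name the riskiest assumption first.")
    return DECISION_RULES[key]


print(pick_test("Compare feature demand"))  # fake-door test
```

The lookup fails loudly on an unrecognized need on purpose: if you cannot state the need in one of these forms, choosing a test is probably premature.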

What Counts as Stronger Evidence

- Stronger: payment, signed pilot scope, repeated use, stakeholder introduction, calendar commitment, migration effort.

- Medium: qualified demo request, detailed objection, targeted signup, feature click from an active user, survey pattern from the right segment.

- Weaker: compliments, social likes, generic waitlist joins, friends saying they would use it, survey answers from people who are not buyers.
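One way to keep these tiers honest is to log each signal you observe and check the strongest tier you have actually reached. The sketch below assumes the three tiers listed above; the `Strength` enum, the `SIGNAL_TIERS` table, the singular signal labels, and the `strongest_tier` helper are hypothetical names for illustration.

```python
from enum import IntEnum


class Strength(IntEnum):
    """Ordered tiers, so a higher value means stronger evidence."""
    WEAKER = 1
    MEDIUM = 2
    STRONGER = 3


# Signal labels adapted from the three tiers above (wording is this sketch's own).
SIGNAL_TIERS = {
    "payment": Strength.STRONGER,
    "signed pilot scope": Strength.STRONGER,
    "repeated use": Strength.STRONGER,
    "stakeholder introduction": Strength.STRONGER,
    "calendar commitment": Strength.STRONGER,
    "migration effort": Strength.STRONGER,
    "qualified demo request": Strength.MEDIUM,
    "detailed objection": Strength.MEDIUM,
    "targeted signup": Strength.MEDIUM,
    "feature click from an active user": Strength.MEDIUM,
    "survey pattern from the right segment": Strength.MEDIUM,
    "compliment": Strength.WEAKER,
    "social like": Strength.WEAKER,
    "generic waitlist join": Strength.WEAKER,
    "friend says they would use it": Strength.WEAKER,
    "survey answer from a non-buyer": Strength.WEAKER,
}


def strongest_tier(observed):
    """Return the highest tier among recognized signals, or None if none match."""
    tiers = [SIGNAL_TIERS[s] for s in observed if s in SIGNAL_TIERS]
    return max(tiers) if tiers else None


print(strongest_tier(["compliment", "generic waitlist join"]).name)   # WEAKER
print(strongest_tier(["compliment", "qualified demo request"]).name)  # MEDIUM
```

Counting weak signals does not promote them: ten compliments still report `WEAKER`, which matches the point that weak signals are prompts for stronger tests rather than proof.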

Use research carefully. The Nielsen Norman Group's article on 5-user usability testing can be useful for learning about product usability, but it is not proof of market demand (Nielsen Norman Group on 5-user usability testing). For demand validation, the closer reference is customer development, which is commonly associated with testing assumptions directly with customers rather than inside internal planning (Steve Blank on customer development).

Survey and interview answers can be distorted by question wording, respondent selection, and measurement error. Treat them as inputs for better follow-up, not as final proof of purchase intent.

Sample math: In a hypothetical smoke test, a founder spends $300 on targeted traffic. 600 visitors arrive, 42 click the primary offer, and 7 book a call. That is a 7% visitor-to-click rate and a 1.2% visitor-to-call rate. Those numbers are not universal benchmarks; the useful comparison is whether the audience, offer, and call quality justify a stronger test, such as a pilot conversation.
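The arithmetic in the sample can be reproduced with a few lines of Python. This is a minimal sketch using the hypothetical numbers above; the `funnel_rates` function and its field names are illustrative, and the click-to-call and cost figures are derived from the same inputs rather than stated in the example.

```python
def funnel_rates(spend, visitors, clicks, calls):
    """Compute conversion rates and unit costs for a simple smoke-test funnel."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return {
        "visitor_to_click": clicks / visitors,
        "visitor_to_call": calls / visitors,
        # Guard against division by zero when a step has no conversions.
        "click_to_call": calls / clicks if clicks else 0.0,
        "cost_per_visitor": spend / visitors,
        "cost_per_call": spend / calls if calls else None,
    }


rates = funnel_rates(spend=300, visitors=600, clicks=42, calls=7)
print(f"visitor-to-click: {rates['visitor_to_click']:.1%}")  # 7.0%
print(f"visitor-to-call:  {rates['visitor_to_call']:.1%}")   # 1.2%
print(f"cost per call:    ${rates['cost_per_call']:.2f}")    # $42.86
```

Cost per call is often the more useful number for deciding on the next test, because it shows what each qualified conversation cost, not just how many people clicked.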

Will business idea validation actually get you to first customers?

Market validation examples can help you choose the right test, but they do not create demand by themselves. The founder's job is to identify the riskiest assumption at the current stage and run the smallest credible test that can change the next decision.

The mistake is treating validation as a checklist: interviews, then survey, then landing page, then build. That sequence can work, but only if each step answers the real uncertainty. If your problem is weak, a smoke test will not fix it. If your audience is wrong, a survey can make the wrong answer look precise.

First customers often come from compounding evidence: a sharp segment, a painful problem, a credible offer, and a commitment path that asks for more than praise. Use these examples to avoid overbuilding, but keep moving toward behavior: calls booked, pilots scoped, budgets discussed, and real usage observed.

This is why I built Traction OS. Fix your foundation before you launch.

FAQ

- You: What is the best market validation example for a first-time founder? Guide: Start with problem discovery interviews if you do not yet know the buyer, pain, current workaround, and buying trigger. A landing page can feel faster, but a page with weak positioning mainly tests weak positioning.

- You: Are surveys good market validation? Guide: Surveys are useful for checking patterns, language, and segmentation after you have done enough qualitative discovery to ask better questions. They are limited proof of purchase intent unless respondents are qualified buyers and the questions avoid hypotheticals.

- You: Is a waitlist enough to validate demand? Guide: Usually not. Treat a waitlist as a light signal rather than a final answer. It becomes more useful when the audience is targeted, the promise is specific, and the next step asks for a stronger action such as a call, pilot, payment, or workflow review.

- You: How do I know when to stop validating and start building? Guide: Build when the next unknown cannot be answered credibly without a product, prototype, or manual version. If you still do not know who the buyer is, why the problem matters, or what commitment they will make, keep validating before building more.
